Recently we’ve seen a rash of publicity coming out of New Orleans and from Leslie Jacobs about the New Orleans turnaround miracle. According to her press releases and op-ed pieces in the Times-Picayune and the Baton Rouge Advocate, New Orleans leads both the state and the nation in graduation rate. That would be some wonderful news . . . if it were true. I think this claim needs to be examined a little more closely and not just accepted as fact, and I will explain why.

For many years, New Orleans provided some of the worst data of all of Louisiana’s parishes, even before Katrina. I know this, because I was the one who had the thankless task of reviewing and pointing out obvious omissions – trying to get at least the outright ridiculous data fixed. Many of their schools failed to report discipline actions or attendance, or rather, some of their lowest performing schools were reporting perfect attendance, for every single student, and zero suspensions and expulsions, year after year. We had no audit or enforcement arm with any teeth at LDOE, and this parish was not the only one doing this, so this type of poor data was reluctantly tolerated. Most years, prior to Katrina, they had trouble sending in any data at all, and were always sending data down to the wire come submission time, forcing us to accept what they gave us or delay reporting anything for anyone.

After Katrina, in 2005, New Orleans was wiped out just as the 2005-2006 school year was beginning. Their data systems were under water, as were most of their hard copy student files, as were any residents unlucky enough to end up stranded in the city. This was a very stressful time for everyone: everyone was forced to evacuate, and in some cases to evacuate again after Hurricane Rita ripped another hole in the western part of our state. During this time we had to start making data collections monthly and tracking where all these students were going. Many of them ended up in other states, 48 of them plus DC if I recall correctly. Most of our evacuees from New Orleans ended up in Houston and Baton Rouge. Into this vast chaos came the charter schools, and the RSD, the Recovery School District, was born.

Slowly the city was emptied of water and the reeking refuse of thousands of rotten refrigerators. Even the air quality in Baton Rouge, from all the mold spores filling the air from a rotting city 60 miles away, was quite poor (not that air quality in Baton Rouge with all of our chemical plants and cars is that great anyway, but it was definitely noticeable to allergy sufferers and asthmatics like myself). Gangs were roaming the streets at night in New Orleans and in some places during the day, and the National Guard was patrolling the 9th Ward. FEMA was ridiculed, and rightly so, for being largely ineffective and unorganized. Ray Nagin, the Mayor of New Orleans, was making strange speeches about losing his “Chocolate City” as it became apparent that darker skinned and less affluent residents were having a harder time returning to New Orleans than many paler hued ones. Most police officers and teachers were unable to return or report to work because their homes were destroyed and housing was very limited. (All Orleans teachers were also actually fired and told they could apply for new spots, if they opened up, but many charter operators developed a taste for much less experienced and less expensive TFA recruits.) Many people had to commute to New Orleans on buses from Baton Rouge every day, and home every night. A number of my coworkers from the Louisiana Department of Education volunteered, or were volunteered, to supervise children in schools that were opening up. These “schools” were operating as not much more than glorified daycare facilities for the children that remained or were able to return. Not much learning happened in the 2005-2006 school year, but a number of my coworkers were physically assaulted and had their cars vandalized for their trouble. Data from that year could not and should not be compared to anything, and to report doing better than 2005 is a foolish and disingenuous claim to make.

It was into this chaos the New Orleans charter movement was ruthlessly spawned. People were afraid they had no other choice, and a lot of money eventually started flowing from federal recovery coffers. There were plenty of outstretched hands waiting to receive those funds.

The 2006-2007 school year was also largely lost. An Assistant Superintendent named Robin Jarvis chose, or was chosen, to try leading the fledgling RSD. A vendor named Tyler-Munis was selected by RSD to collect and report their student data. This collection system was never implemented. Even today, LDE has no idea how many students came and went through RSD’s doors. RSD did not send any discipline or attendance data for any of its schools that year (schools were keeping sketchy records on spreadsheets, or hardcopy, or nothing). The February collection resulted in RSD sending some students 10 or more times with different student ID numbers, sometimes at the same schools, sometimes at different ones, making it impossible to know how many students they had, or who had them, or when. RSD actually gave up and did not send any end of year data, so we had no information on graduates or dropouts, or on transfers in or out. This caused many other districts in the state to have dropouts they did not deserve, because the records of students that transferred to RSD were never transmitted by RSD to the state.

The Orleans Parish school system also reopened and their data collections and reporting went much smoother with a different incarnation of their previous vendor. Orleans Parish kept most of the high performing magnet schools, some of which were the best in the state or nation pre-Katrina. The creation of the RSD system left them with the easiest students to serve, the more affluent and gifted ones, and RSD and the various charters were left with whoever was left over. Dozens of charters came and went over the next few years with varying degrees of compliance with submitting data and varying degrees of success in educating students. Many independent charters did not submit everything they needed to, or were obviously submitting false data. RSD was in charge of ensuring these charter operators were complying with state laws and policy, but they did a piss poor job. RSD usually ignored our requests to investigate data issues of their own or of the charters they were supervising. It was during this time, 2006-2007 and 2007-2008, that charters started realizing they could be selective with their enrollments, or rather with who they did not enroll. We noticed many of these operators were not enrolling very many special education students, claiming they did not have the personnel to address SPED student needs. This was true, this was by design, but this was not legal. These operators could and should have engaged the services of experienced Special Education teachers and acquired the necessary facilities, but those sorts of hires and purchases would have cut into profits (and CEO salaries for non-profits), so many charters did whatever they could to discourage and redirect these students to RSD.

In 2007-2008 Paul Vallas came to town. He brought with him a gaggle of cronies looking to make a quick buck, including his Right Hand Technology Man, Jim Flanagan. From the very first meeting with Flanagan it was obvious he already had a new vendor in mind, PowerSchool. He’d done business with them before, and even though his earlier implementation with them was a failure, he felt they had improved. (That was a big red flag to me. Flanagan actually recounted several projects he’d worked on in St. Louis and other places, all of which were dismal failures by his own reckoning, yet somehow he felt compelled to tell us that, like failure was a prerequisite to working in New Orleans.) I was trying to encourage my folks to pick a local Louisiana vendor known to us as JPAMS. They had an exemplary track record with us LDE folks, and their clients already represented more than half the state. (PowerSchool did not even have one client in Louisiana at the time.) After reviewing an embarrassing RFP response from PowerSchool and a great one from JPAMS we had to make our decision. Flanagan gave PowerSchool all 10s on his evaluation for every category, even though they told us they could only meet 1/3 of the RFP requirements, they could not meet our timeline, and they bid 2 to 3 times as much via some weird à la carte proposal. Still, I was told by my other team members to keep the scoring close, or PowerSchool would challenge the selection, so I went along. In the end I got who I wanted.

It took most of 2007-2008 but eventually JPAMS got RSD mostly straightened out by supplying many of their own personnel to do much of the data entry work for RSD. It wasn’t until 2008-2009 that LDE was finally getting decent data.

This was about the same time we started collecting truancy data. There was quite a bit of variation in how districts were reporting this to us. I came up with a pretty strict mathematical description of how I wanted this calculated, but we had a few districts that told us they would not comply. Orleans Parish was one of those. Apparently their superintendent did not want to report that indicator as defined, because it would make them look bad to the media. If I recall correctly, the Orleans Parish School Board reported a 0.027% truancy rate while average rates ran around 5% – 10% for those doing it correctly, based on the definition at that time. I believe RSD had a rate of around 30-40% and some of the charters had rates as high as 70 or 80%.

Another neat little trick that was employed in years past is changing the exit codes for students who were listed on “preliminary drop out rosters” to exiting out-of-state or to a non-public school. No documentation is required for this change. We’ve had a few enterprising principals that instructed their staff to “fix” their dropout problems. Changing exit codes to statuses we can’t track, like exits to non-publics (who don’t share student level data with us) or other states (who also don’t share data with us), takes care of the “problem.” Only a few schools have been caught doing this in the past. They were very obvious about it, and it was years ago, before the reigns of John White and Paul Pastorek, when LDE actually did a little rudimentary data auditing. (Interestingly enough, we did this auditing before the data was as important as it is now for school performance scores and tracking State Superintendent progress.)

These are just a few examples of data deception. I don’t want to bore you anymore with my overly elaborate data collection horror stories, but suffice it to say I will be impressed if New Orleans is really graduating as many quality graduates as they are claiming. I think it’s worth mentioning that since I posted my blog entries (one and two) on Louisiana’s bogus dropout rate I’ve had confirmation that districts in Louisiana have been rolling over their adult education students for the last 3 years, keeping them from enrolling in grade 9 or becoming dropouts. Around 3 or 4 years ago an enterprising LEA found some language in the NCES (National Center for Education Statistics) dropout definition that permitted LEAs to exclude students from dropout counts if they enrolled in an “LEA monitored” adult education program and were pursuing their GED. I argued against making this change because I felt it could easily be abused, and because very few of these students actually do earn GEDs. At most, all this change should do is shift dropouts to another year, if data is entered correctly.

I ran some historical reports on this data comparing the GED completers to students that became dropouts after leaving school to enroll in adult education programs. I found that of the 7 or 8 thousand students that did this, fewer than 500 obtained GEDs within 2 years. Coincidentally, the average decrease in dropouts over the past 3 or 4 years is about 8 thousand students. (Apparently all of our school districts realized they were monitoring their adult education students working to obtain GEDs and, despite this careful monitoring, were unable to report when those students left those programs.) Students that exit school to an adult education program in 8th grade never enter a 9th grade cohort, and never “drop out” data-wise. They also never graduate and never end up in a denominator. If our graduation rate and enrollment are skyrocketing, why are our graduate counts remaining relatively flat or only showing modest gains?
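To make the denominator point concrete, here is a rough sketch with made-up numbers. The cohort size, graduate count, and function name are all hypothetical, and this is a simplified graduates-over-cohort ratio, not the official cohort formula or actual LDE data. It only shows how the rate can climb while the number of graduates does not move at all.

```python
def cohort_graduation_rate(graduates, cohort_size):
    """Simplified rate: graduates divided by students counted in the cohort."""
    return graduates / cohort_size

# Hypothetical numbers, for illustration only (not LDE figures).
graduates = 600            # same number of diplomas in both scenarios
true_cohort = 1000         # every student who should have been counted
routed_to_adult_ed = 150   # exited to adult education before entering the cohort

honest_rate = cohort_graduation_rate(graduates, true_cohort)
reported_rate = cohort_graduation_rate(graduates, true_cohort - routed_to_adult_ed)

print(f"honest rate:   {honest_rate:.1%}")    # 60.0%
print(f"reported rate: {reported_rate:.1%}")  # 70.6%
```

Same 600 graduates either way. The only thing that changed is who got counted.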

Another nice little tidbit I think worth noting is that the author of these op-ed pieces, Leslie Jacobs, was also a member of BESE, the state board of education, when New Orleans went all takeover crazy. She is not exactly an unbiased source, since a positive view obviously reflects well on her decision and a negative report would not.

Still, it would be nice to verify this claim with even unreliable data, but the Louisiana Department of Education has made it a policy to deny most data requests, even simple ones. Just this past week they took down all their previously published historical data to make researching their claims that much more challenging.

To give you just one example of what most data requesters and researchers have to deal with: I recently was subpoenaed to testify about the existence of data that LDE claimed did not exist and claimed they could not provide to a researcher. The problem is, not only did that data exist, I created it before I left. I was asked to provide it to a different researcher, one friendly to LDE, on the condition they only write nice things about them. (I have a copy of the MOU in my possession that says the researcher can only release data and reports that LDE approves of.) I also gave that same student data to a lawyer working with the Jindal office for the BP lawsuit. Other than those two groups, no one else received it . . . that I know of. Nevertheless, LDE refused to give data to the researcher that contacted me, so I agreed to help.

When the LDE reps and their lawyer saw me in the courtroom, I guess they decided not to perjure themselves that day. Instead, they changed course, stipulated to the existence of the data, and then made the argument that FERPA did not allow them to provide that data, and that in any event, they were under no obligation to provide data to anyone other than those they chose to share it with . . . and they asked for a continuance to make this new argument. It’s been over a year, and many thousands of dollars in lawyer fees, since the initial request was made for basic enrollment, entry and exit data for New Orleans schools (data that might indicate, for instance, whether New Orleans schools, and especially charters, have an inordinate number of out-of-state transfers).

How long do you think it would take to get this data on graduates?

And if that’s not enough, LDE has made it a habit of excluding low performing schools from district composite reports so they can report continuous improvement. If those schools were included, RSD’s performance score would actually be declining according to Dr. Barbara Ferguson. As of the last count, 12 schools were excluded from the last calculation. I wonder how many schools might be missing in Leslie’s report. . .
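Here is a rough sketch of how dropping low performers moves a district composite. The school names, scores, and enrollments are invented, and weighting by enrollment is my assumption for illustration, not a description of how LDE actually computes district scores.

```python
def composite_score(schools):
    """Enrollment-weighted average of school performance scores (assumed method)."""
    total_students = sum(s["enrollment"] for s in schools)
    return sum(s["score"] * s["enrollment"] for s in schools) / total_students

# Hypothetical schools, for illustration only.
schools = [
    {"name": "School A", "score": 80.0, "enrollment": 500},
    {"name": "School B", "score": 70.0, "enrollment": 300},
    {"name": "School C", "score": 30.0, "enrollment": 200},  # the low performer
]

with_everyone = composite_score(schools)
without_lowest = composite_score([s for s in schools if s["name"] != "School C"])

print(f"all schools included: {with_everyone:.2f}")   # 67.00
print(f"School C excluded:    {without_lowest:.2f}")  # 76.25
```

Nothing about any school changed between those two numbers. One school simply stopped being counted.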

Since LDE only provides summarized data or makes press releases announcing grandiose claims, but does not proffer any data to support those announcements and claims, I would recommend strongly against believing them. When I worked there, LDE actually produced and provided reports and data to anyone who asked – if we had the data and the work was not overly excessive. (Even then, the data had significant problems and limitations to its use.) Now LDE has very few people left in the data collection area qualified to review the data, or even understand it, and they have a superintendent who has determined the data released must only say positive things about his work, even if it means accepting obviously impossible claims or excluding schools from calculations and reports that don’t forward the narrative he is trying to craft. Until data is freely released and historical data is restored (that would reveal the existence of schools excluded in the future for instance) I would strongly recommend against believing anything they claim. If you could show your claims were legitimate, that you could put people like me in my place, wouldn’t you want to share that data with the world to prove your claims and prove your detractors wrong? What LDE has done instead is actually remove all previous data from their website, even data contained in previous press releases.

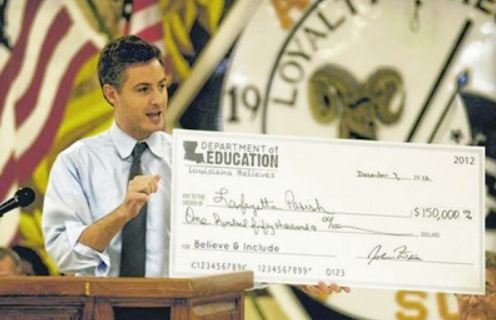

LDE and John White even renamed their website to www.louisianabelieves.com. Does that sound like the act of a confident person, or the act of a desperate coward? They really hope you will just believe, but you do so not just at your own peril; our children’s futures are at stake as well.

When was the last time a politician told you to just believe him on blind faith, and that turned out well for you?

John White definitely has a god complex, but Louisiana, you don’t have to worship him.